Projects

2014

Wearable Tech

Current Projects

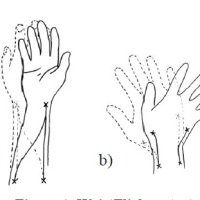

Embodied Game Controller

Grasp virtual objects. Use your knowledge of the real world to manipulate them.

2013

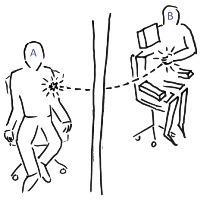

Mediated Touch: A Telepresence Study

Can I touch somebody who is at the other end of the world? If so, what will it mean to us?

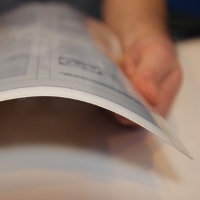

PaperTab

Combining Digital and Physical Media

2012

DisplayPointer

Seamless pointing interactions *with* displays, *between* displays.

WristFlicker

Wearable motion capture system

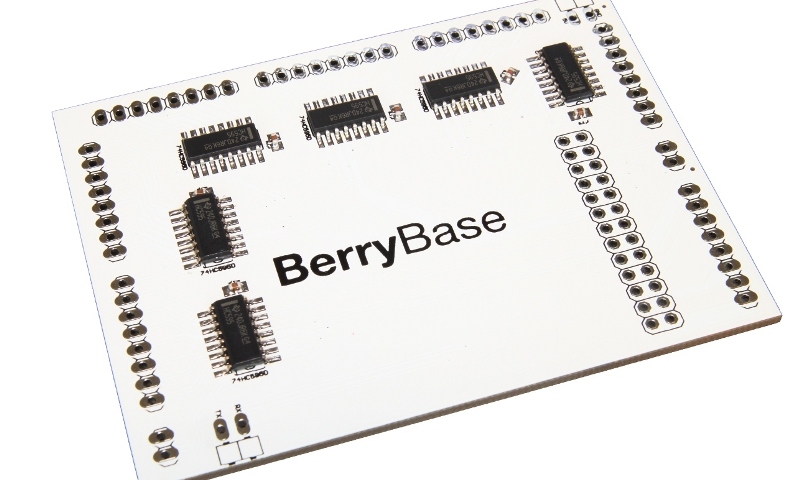

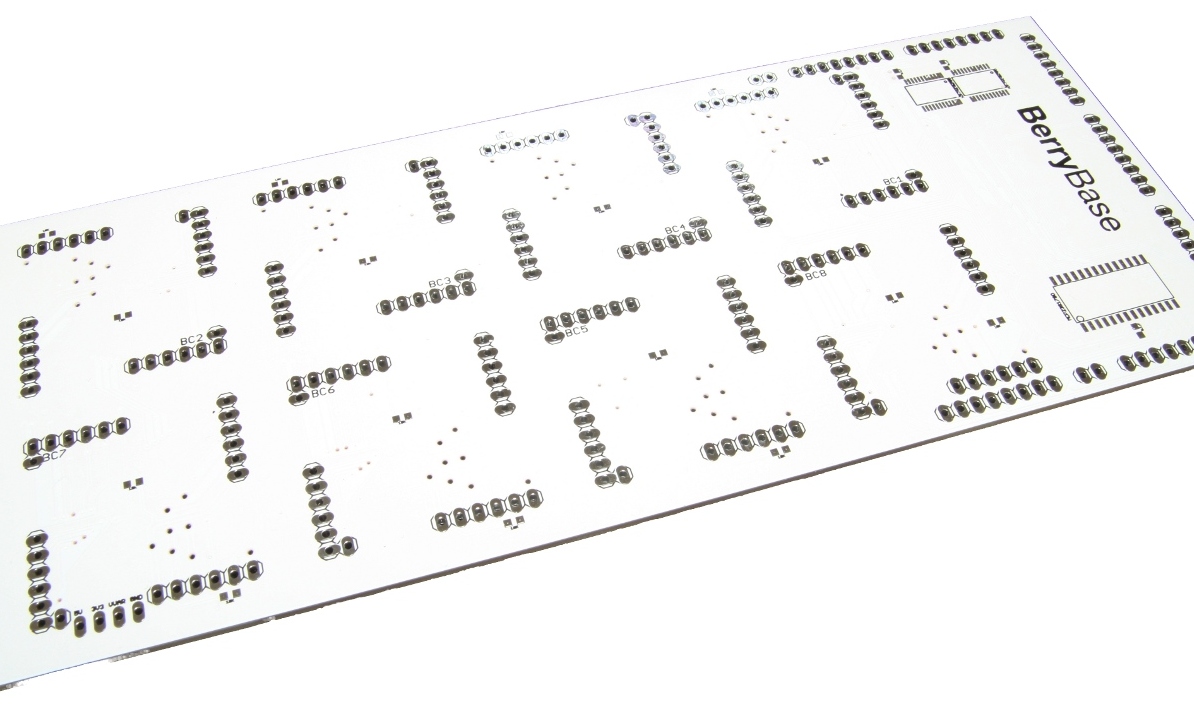

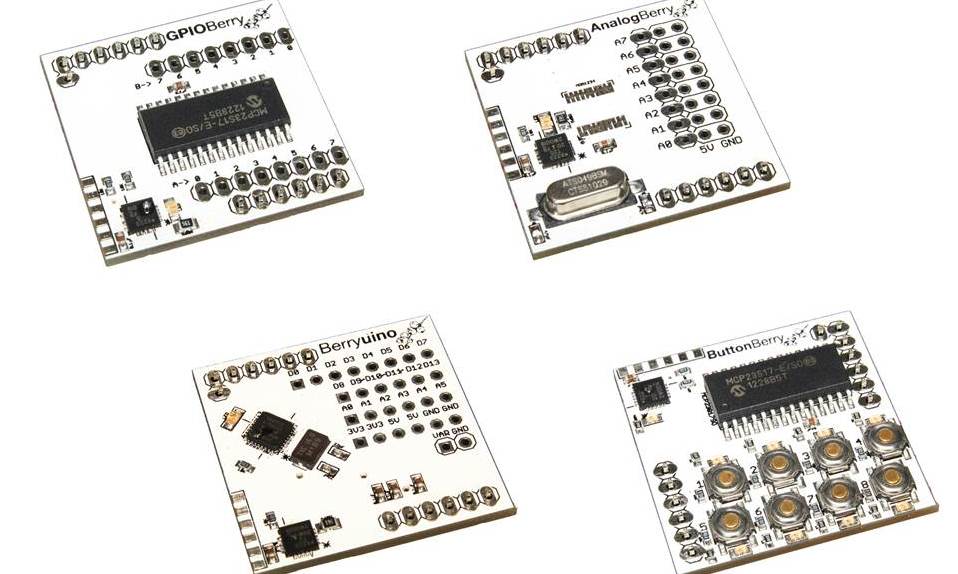

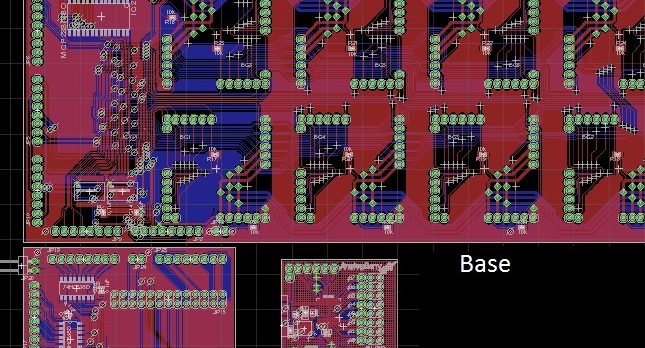

BerryBase

A plug'n'play Raspberry Pi software & hardware interface

2011

A Flock of Birds

Making Paper Fly

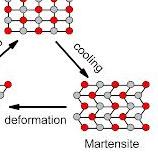

Shape Memory Alloys

Making things move

Re-appropriated Bicycle

The opposite of breaking things.